Autonomous cars, or “self-driving cars” in layman’s terms, are on the horizon. Within the next generation, cars with no drivers, controlled exclusively by computers and complex traffic algorithms, could very well become the norm. In theory, this could reduce or even eliminate fatal collisions, and cut the number of injury accidents, and even fender benders, to near zero.

However, thought must be given to how accidents may still occur, even with an autonomous vehicle, and with whom liability ultimately lies.

What does the soon-to-be autonomous future mean for things like insurance and liability?

To understand what exactly defines an autonomous vehicle, we must first understand what defines a vehicle’s level of autonomy. In the United States, the NHTSA uses the six levels of driving automation defined by SAE International:

| Level | Description |
|---|---|
| Level 0 | No automation. The driver performs all driving tasks; the vehicle may only issue warnings or provide momentary assistance, such as automatic emergency braking |
| Level 1 | Driver assistance. The vehicle is controlled by the driver, but a single assist feature, such as adaptive cruise control or lane-keeping assist, helps with either steering or speed |
| Level 2 | Partial automation. The vehicle can control steering and speed at the same time, but the driver must supervise constantly and remain in control |
| Level 3 | Conditional automation. The driver may let the vehicle assume control, but must remain alert and aware to take over should the automation request it or fail |
| Level 4 | High automation. A driver is optional; the vehicle is capable of driving itself under specific operating conditions. A driver, if present, may assume control at any time |
| Level 5 | Full automation. The vehicle is autonomous in all conditions and situations. No driver is required, and control surfaces such as a steering wheel and pedals may not exist |

As it stands, in most parts of the world, vehicles currently fall between Levels 0 and 2 on the scale. Tesla EVs with the Autopilot feature are often thought of as Level 3 vehicles, but Autopilot is classed as a Level 2 driver-assistance system, and even the manuals for these cars explicitly state that the driver must remain alert and aware and is responsible for taking over when needed.

Legal experts have mixed feelings on the subject. To get some clarity, we asked the auto accident lawyers at Schultz & Myers what they thought. In short, any vehicle up to and including Level 4 autonomy allows a human to take over control, and thus, in the strict letter of the law, liability still lies with the owner of the vehicle.

In today’s world, this means that even in a car with partial automation, the insured is ultimately the liable party. However, once we, as a society, reach Level 5 automation, where there may be no control surfaces with which to take over the vehicle, liability becomes a far more complex concept.

Photo credit: Pexels

If two autonomous cars get into an accident, who is at fault?

To be frank, no legislation or insurance yet exists that covers fully autonomous vehicles in all aspects of liability. A clear distinction must also be drawn between the terms “self-driving” and “autonomous,” as one does not equal the other. “Self-driving” describes any vehicle at Level 4 or below that still has human-operable control surfaces; “fully autonomous” applies only to Level 5.

So what happens if two Level 5 autonomous vehicles smash into each other, damaging both vehicles and causing personal injury and property damage?

In the UK, a groundbreaking law was passed: the Automated and Electric Vehicles Act 2018 (Public General Acts, 2018 c. 18). Part 1, Section 2 states that when an automated vehicle (a) is driving itself, (b) is insured, and (c) causes an accident resulting in property damage or injury to persons inside the vehicle or others, the INSURER is liable. Where the automated vehicle is (a) driving itself, (b) not insured because it is exempt from the usual insurance requirement (as with certain vehicles owned by public bodies or in the service of the Crown), and (c) causes property damage or injury to persons inside the vehicle or others, the OWNER of the vehicle is liable.

Further, under Part 1, Section 4 of the same Act, particularly subsection (1), an insurance policy may limit or exclude the insurer’s liability if the accident results from alterations to the vehicle’s automation software that the policy prohibits, or from a failure to install safety-critical software updates that the insured person knew, or reasonably ought to have known, were safety-critical.

Again, however, this Act assumes that a human will, at one point or another, own the vehicle and be responsible for its upkeep. What happens if, in 50 or 60 years, no human upkeep is required and everything is fully automated?

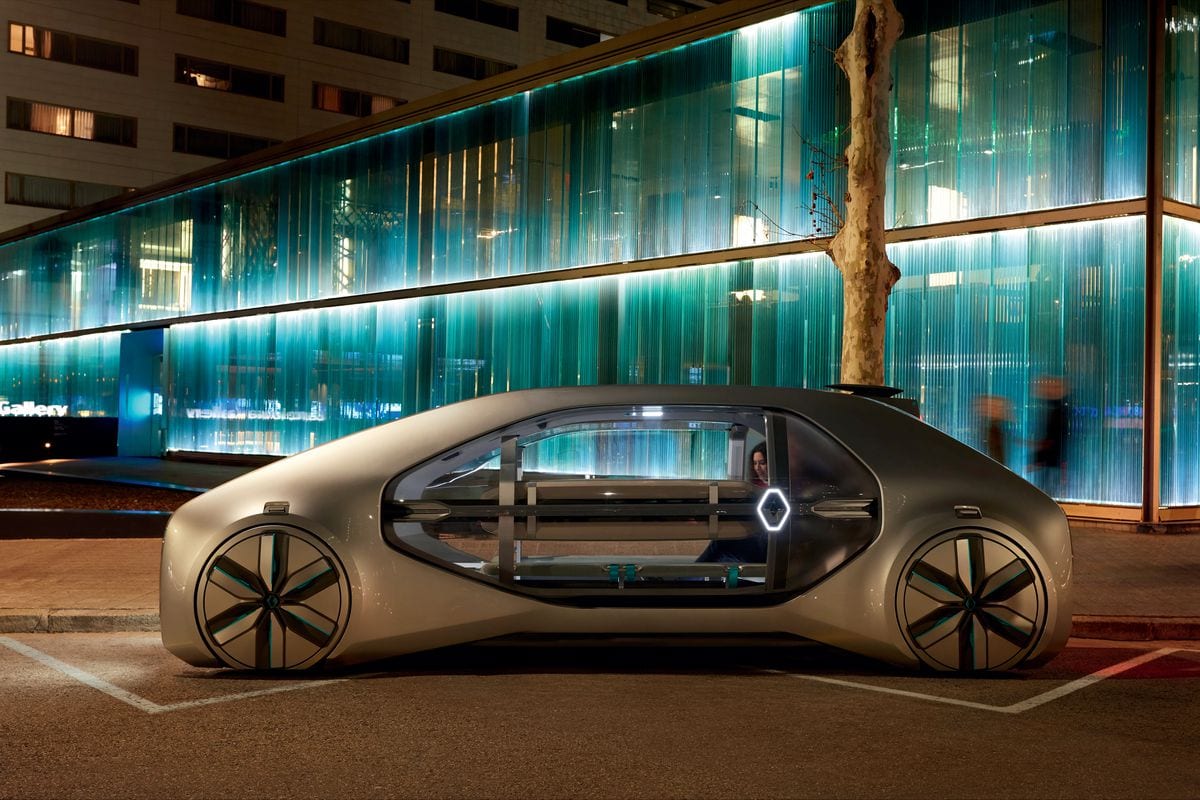

Photo credit: Renault

What is the legislation of the automotive future likely to look like?

Imagine it’s 2081, exactly 60 years in the future. The roadways are populated by autonomous vehicles, inter-city travel is performed by hyperloop systems, space tourism is finally affordable, and traffic is controlled by powerful deep learning systems that adapt to changing conditions a million times per second. We, as a society, have moved past the idea of vehicle ownership: there is no reason to own a vehicle when you can press a button by your door, and by the time you reach the sidewalk, an autonomous vehicle pulls up to the curb.

Of course, this is a very idealistic outlook, and it would require new laws and new thinking about liability and insurance. In the most basic sense, the owner of the vehicle would still assume liability. The onus of ownership, however, will most likely have passed to local government or to the vehicle manufacturers, and prescribed laws and statutes will be needed to govern that liability.

It is extremely difficult to even guess at what that liability structure would entail, but ultimately, it still returns to the human factor. Someone, somewhere, told someone else what to do, what to build, what to program into the vehicle’s software. So while the person inside the vehicle may not be liable, someone will be.

A present-day example

This has arguably already been demonstrated in the fatal collision between an Uber autonomous test vehicle with a safety driver on board and Elaine Herzberg, a pedestrian pushing a bicycle across a road at night. The incident occurred on March 18, 2018, at 9:58 PM MST in Tempe, Arizona.

Without going into excessive detail, the Uber test vehicle was operating in autonomous mode, in testing sanctioned by the State of Arizona, when it failed to identify Ms Herzberg, who had stepped onto the road from behind vehicles, outside a marked crosswalk, in poor lighting, while wearing dark clothing. The Uber, a prototype built on the Volvo XC90 SUV, detected Ms Herzberg and her bicycle about 6 seconds before impact but struggled to classify them, and did not determine that they were an actual hazard until approximately 1.3 seconds before impact. The human safety driver, Ms Rafaela Vasquez, also did not see Ms Herzberg until about the same time, as records show she was distracted by video on her smartphone.

The autonomous control software determined that emergency braking was needed, but Uber had disabled emergency braking while the vehicle was under computer control, so no braking occurred until Ms Vasquez pressed the brake pedal fully, less than a second after the impact, which occurred at 39 mph (63 km/h). The Uber was a prototype for Level 4 automation with the ultimate goal of Level 5, but was realistically operating as an advanced Level 3 vehicle.

As a result of the incident, liability quite literally had to be assigned in court, as the case was extremely complex and had a surprising twist. It was ultimately shared between Ms Vasquez as the safety driver, Uber as the company testing the autonomous vehicle, the State of Arizona as the authority that sanctioned the testing, Ms Herzberg for failing to use a marked crosswalk 360 feet from the point of impact… and the Volvo XC90 prototype itself, as an identified non-human entity under law.

The precedent this sets for a fully automated future is unknown. However, if the vehicle itself can be held liable as a recognized non-human entity under law, the question then becomes: which human, if any, is ultimately liable?

The answer lies in the autonomous future.
